Hi,

I was a digital artist across multiple disciplines, and now I’m programming ways to make the tools better for artists and to take our power back from the AI tech bros.

But here on the forum you may remember me from other pro-artist, anti-AI conversations such as:

If you read through the thread, you have some important takeaways:

YES: Nightshade CAN run on Linux, but:

- They offer no native solution, so if you’re clever you can emulate it, which is slower than native.

- You may find yourself unable to set it up for one reason or another.

- And even then there’s no guarantee that the GPU will work, and CPU is much slower.

And on top of this, Nightshade doesn’t align with the libre movement and, quite frankly, may not stack up against what the community can put together.

The goal of this proposed Add-on:

Using Psyker-Team’s ‘Mist version 2’

In combination with ACLY’s AI add-on:

Integrate training-resistant, “camouflage”-based perturbation directly inside Krita, so that an artist can protect their work almost before it even leaves the app. Keep creative control, reduce training value to scrapers, stay local and open, and reduce the threat of scraping even in your local files.

You could paint an artwork, start the poison process, go take a walk around the block, and by the time you’re back your artwork has been AI-poisoned, without the original having left Krita for any longer than is necessary to poison it before it’s quickly placed back in the document.

Then you can paint it out wherever you want less of the visual disruption, and have complete control over the overall strength.
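
To make the “paint it out” and “overall strength” idea concrete, here is a rough sketch of the arithmetic I have in mind. It is not Mist’s code and not a Krita API; `blend_pixel` and `blend_image` are hypothetical names, and in Krita itself this would more likely be done with a layer mask and layer opacity. The mask is 1.0 where you keep the full perturbation and 0.0 where you painted it out.

```python
def blend_pixel(original, poisoned, strength, mask):
    """Blend one 0-255 channel value; strength and mask are both in [0, 1]."""
    weight = strength * mask  # 0 keeps the original, 1 keeps the poisoned value
    return round(original + weight * (poisoned - original))


def blend_image(original, poisoned, mask, strength=1.0):
    """Apply the blend to flat lists of channel values of equal length."""
    if not (len(original) == len(poisoned) == len(mask)):
        raise ValueError("original, poisoned and mask must have the same length")
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return [
        blend_pixel(o, p, strength, m)
        for o, p, m in zip(original, poisoned, mask)
    ]


# Toy data (made up): four channel values, the third one fully painted out.
original = [100, 100, 100, 100]
poisoned = [120, 80, 110, 80]
mask = [1.0, 1.0, 0.0, 0.5]

print(blend_image(original, poisoned, mask, strength=1.0))  # [120, 80, 100, 90]
print(blend_image(original, poisoned, mask, strength=0.5))  # [110, 90, 100, 95]
```

Keep in mind that lowering the strength or masking areas out presumably also lowers the protection in those areas, so this is a trade-off you would tune per artwork.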

Where it stands now

• The core technique has been validated on Linux using the MIST CLI only (local runs, no uploads).

• It works with models artists already have through ACLY/ComfyUI.

• Visual result is a subtle shimmer at pixel-peeping range; normal viewing remains faithful. Strength will be tunable. Tests reveal that minimal pixel difference has been fairly traded with

• Both GPU and CPU runs are supported.

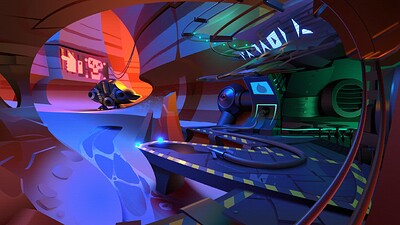

Here’s a banner with my original artwork in the background:

And here is the same banner processed by mist:

From a distance it looks OK; up close it’s, to be honest, a bit of an acid trip to look at… But as Psyker-Team improves Mist, and as we find and provide additional controls and model versions, it’s going to become harder to notice the patterns and harder still for data thieves to easily scrape our portfolios.

I did perform a VAE latent test to compare how differently the AI sees the image with how similar it still looks to the human eye:

- VAE-latent cosine: 0.820 → latent shift ≈ 0.18 (1 − 0.82). That’s the “poison factor” under an SD-1.5 VAE: ~18% move in feature space.

- Pixel SSIM: 0.948 → high visual fidelity (looks close to the original to humans).
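
For anyone who wants to see how the “latent shift” number is derived, here is a minimal sketch of the arithmetic: one minus the cosine similarity between the two latent vectors. The function names are my own for illustration, the toy vectors are made up to land on 0.82, and the step that actually encodes the images with the SD-1.5 VAE is not shown here.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two flattened latent vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def latent_shift(a, b):
    """The 'poison factor' as used in this post: 1 - cosine similarity."""
    return 1.0 - cosine_similarity(a, b)


# Toy 2-D stand-ins for the real latents (hypothetical values).
original_latent = [1.0, 0.0]
misted_latent = [0.82, math.sqrt(1 - 0.82 ** 2)]

print(f"cosine: {cosine_similarity(original_latent, misted_latent):.3f}")
print(f"latent shift: {latent_shift(original_latent, misted_latent):.2f}")
```

With real latents you would flatten each encoded image into one long list of numbers first; the formula stays the same.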

Keep in mind that, as with Nightshade, AI detectors WILL flag the art as AI-generated, but that’s preferable to a data scraper seeing your original hand-drawn painting as a yummy training snack.

If you’re applying for a job, you can always promise you’ll show your original art in person.

Also, I am using this as a one-time-only example to show the difference between the original and the misted image. I would advise against posting both versions of your work online, as such pairs are actually used by AI engineers to create poison antidotes. As we layer on different models and improve the algorithms for poisoning data, it’s going to become more important not to reveal our hands. I’m just explaining this so you can understand roughly what goes into this and why it works.

Same reason you keep your encryption keys to yourself.

What I’d like to build next:

• A prototype that plugs into ACLY’s AI Gen (ComfyUI-based) and exposes the camouflage from Krita.

• Reason: ACLY/ComfyUI already organizes the relevant models/tools, so artists can see and control what’s happening instead of a black box.

• Design target: lives naturally in Krita (e.g., Filters), with sensible defaults and advanced controls (seeds, tiling, model mix, strength) for unique “poison recipes.”
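
To give a feel for what a “poison recipe” could look like as data, here is a small sketch. Everything in it is hypothetical: the `PoisonRecipe` name, the field names, the defaults, and the model labels are placeholders I made up for illustration, not Mist’s or ACLY’s actual parameters.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class PoisonRecipe:
    """Hypothetical bundle of the advanced controls listed above."""
    strength: float = 0.5   # 0 = no perturbation, 1 = full perturbation
    seed: int = 0           # makes a run repeatable
    tile_size: int = 512    # edge length in pixels when processing in tiles
    model_mix: dict = field(default_factory=lambda: {"sd15": 1.0})

    def validate(self):
        """Raise ValueError on out-of-range settings; return self otherwise."""
        if not 0.0 <= self.strength <= 1.0:
            raise ValueError("strength must be between 0 and 1")
        if self.tile_size <= 0:
            raise ValueError("tile_size must be positive")
        if not self.model_mix or any(w < 0 for w in self.model_mix.values()):
            raise ValueError("model_mix needs non-negative weights")
        if sum(self.model_mix.values()) <= 0:
            raise ValueError("model_mix weights must not all be zero")
        return self

    def normalized_mix(self):
        """Scale the model weights so they sum to 1."""
        total = sum(self.model_mix.values())
        return {name: weight / total for name, weight in self.model_mix.items()}


recipe = PoisonRecipe(
    strength=0.3,
    seed=1234,
    tile_size=256,
    model_mix={"sd15": 3.0, "sdxl": 1.0},  # placeholder labels
).validate()

print(json.dumps(asdict(recipe), indent=2))
print(recipe.normalized_mix())  # {'sd15': 0.75, 'sdxl': 0.25}
```

The point of keeping the recipe as plain data is that it could be saved, kept private, and varied from one artwork to the next.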

Why this approach? Why not emulate Nightshade, or run it on Mac or Windows? Or use Glaze?

• Local and secure. Unlike WebGlaze, this never leaves your computer. Unlike Nightshade and Glaze, your artwork ONLY leaves Krita temporarily to be processed and then resides only in your KRA file.

• Open source: anyone can change it to make it more effective, and it will grow faster with more hands on it.

• Open and customisable: more varied camouflage in the wild is harder to counter than a single closed pipeline. This complements, not replaces, other tools.

But it’s not a silver bullet; it raises cost and reduces fidelity for would-be trainers while keeping you in control.

Values

• Linux-friendly, privacy-respecting, no vendor lock-in.

• Works with the model sets you already have via ACLY/ComfyUI.

• Free-as-in-freedom so the community can audit, improve, and evolve it.

Support

If you want this integrated into Krita with a clean UX and docs, contributions help me spend time building instead of unrelated gigs. Even small donations keep the work moving. I’ll share some links.

If you DO want to help me financially with this goal, I recommend using ‘Buy Me a Coffee’, because you can message me and ask me to focus on this bridge between Krita and Mist.

Thanks for reading. Signal-boosts help—especially from artists who want a local, open alternative that keeps pace with AI training tactics. So please be sure to share this with your friends.