Until now there has been something of a loophole in the forum rules (or rather in how they were interpreted) regarding plugins and tools with AI integration. While we have a strict rule against AI content, the prevailing opinion was that anyone can make any plugin they want. There are no limits on what you can do with Python in Krita other than those imposed by missing API functions. Krita still does not impose any rules on what plugins you can create or use; it wouldn’t make sense anyway. However, that doesn’t mean they must be allowed on this forum.

Since Krita’s core is AI-free and plans to stay that way, even to the point of not allowing AI code, it feels only natural to close this loophole and make clear that plugins and tools that integrate AI, connect to AI services, or are AI themselves will no longer be tolerated. No distinction will be made between generative and other AI tools.

This rule applies specifically to plugins that contain or connect to AI in any way, whether as an integral part of their functionality or an optional one. It is not about plugins that are “vibed” or otherwise coded with AI assistance. AI-assisted code is hard to detect, and some people find it hard to tell what is just smart autocomplete or classic code generation and what is AI, especially because the lines are blurred in some IDEs. We also cannot review every plugin and check for signs of AI-generated code. The forum rules don’t change in that regard, and disclosing the use of AI for coding help (such as Copilot and the like) is still encouraged so that users who want to use the plugin can make an informed decision. It’s good to see that users were already doing this on their own even before we made it an explicit part of the forum rules.

Generally, one should always be aware that a plugin is not automatically more trustworthy just because it was created without the help of AI tools.

We are also aware that not all AI is created equal and that AI has become an umbrella term for a lot of useful tech that existed long before LLMs and image generators. Some tools are called AI (probably) for marketing reasons despite barely qualifying. Exceptions may be granted for such tools if they are ethically sourced, local, small-scale, community-driven, and open source. The Krita Fast Line-Art tool can be seen as an example of this.

Currently only one public topic is affected by this rule: the Smart Segments plugin. A few hidden topics have been under review since this discussion began among the moderators and staff; those will be deleted too.

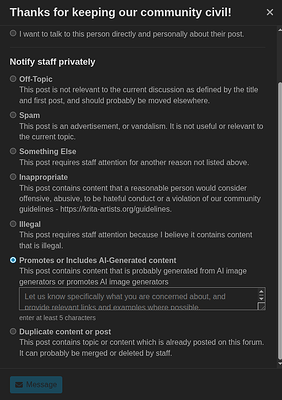

The rule is not limited to the Resources > Plugins and Scripts category; it also applies to feature requests that ask for AI to be implemented and to similar questions about how to do it. Users have always been quick to flag such topics.